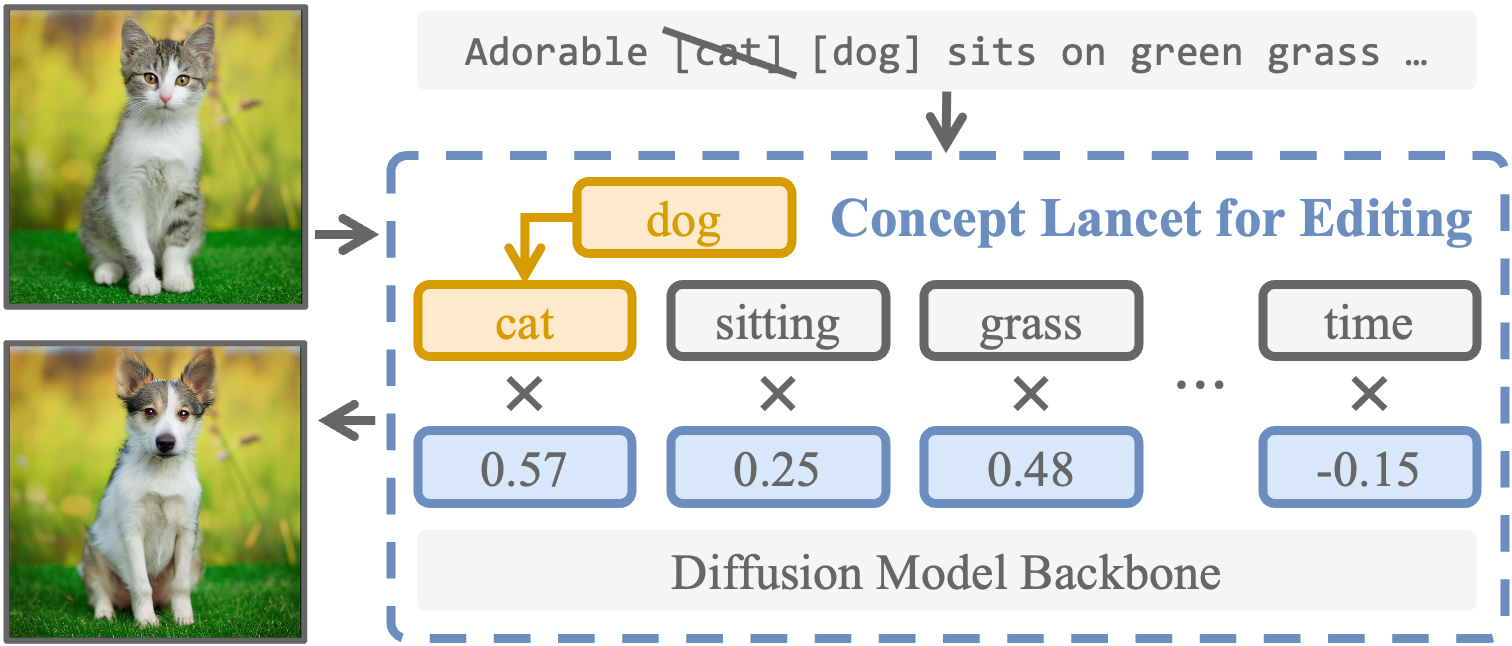

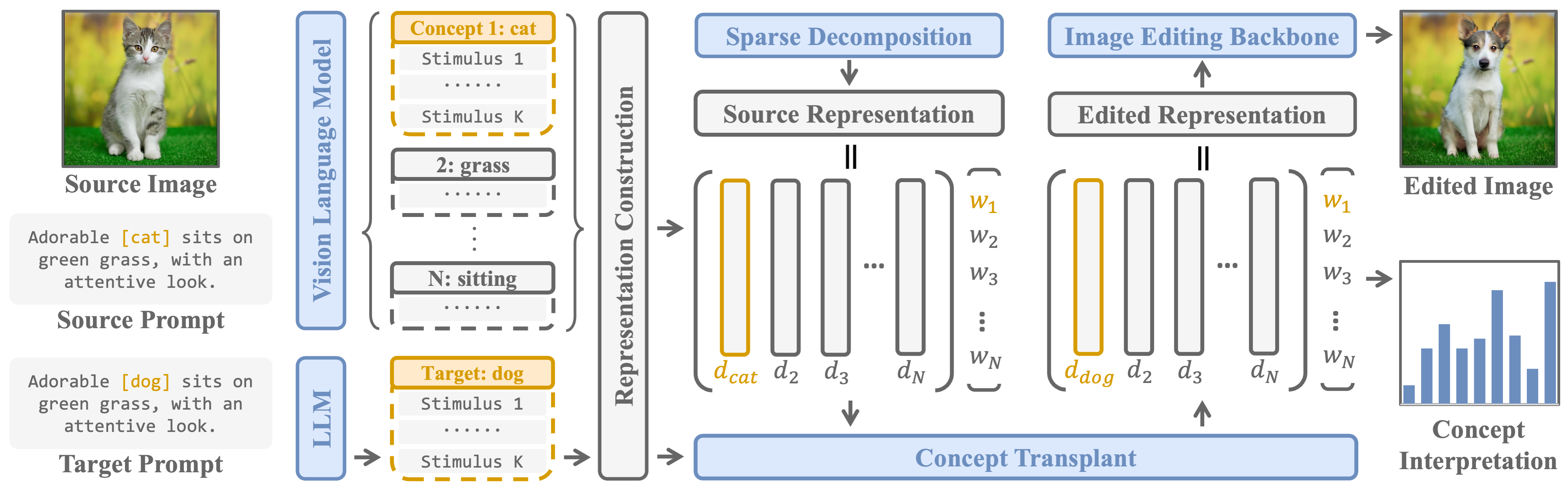

Diffusion models are widely used for image editing tasks. Existing editing methods often design a representation manipulation procedure by curating an edit direction in the text embedding or score space. However, such a procedure faces a key challenge: overestimating the edit strength harms visual consistency while underestimating it fails the editing task. Notably, each source image may require a different editing strength, and it is costly to search for an appropriate strength via trial-and-error. To address this challenge, we propose Concept Lancet (CoLan), a zero-shot plug-and-play framework for principled representation manipulation in diffusion-based image editing. At inference time, we decompose the source input in the latent (text embedding or diffusion score) space as a sparse linear combination of the representations of the collected visual concepts. This allows us to accurately estimate the presence of concepts in each image, which informs the edit. Based on the editing task (replace/add/remove), we perform a customized concept transplant process to impose the corresponding editing direction. To sufficiently model the concept space, we curate a conceptual representation dataset, CoLan-150K, which contains diverse descriptions and scenarios of visual terms and phrases for the latent dictionary. Experiments on multiple diffusion-based image editing baselines show that methods equipped with CoLan achieve state-of-the-art performance in editing effectiveness and consistency preservation.

Given a source image and prompt, CoLan first uses a vision-language model to extract visual concepts (e.g., cat, grass, sitting) and construct a concept dictionary. The source representation is then decomposed along this dictionary, and the target concept (dog) is transplanted in place of the corresponding source atom to achieve a precise edit. Finally, the image editing backbone generates an edited image that incorporates the target concept without disrupting other visual elements. See the paper for more details.
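The decompose-then-transplant step described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the dictionary `D`, the ISTA solver, the penalty `lam`, and the helper names `sparse_decompose` and `transplant` are all hypothetical, and random vectors stand in for real text-embedding or score representations.

```python
import numpy as np

def sparse_decompose(x, D, lam=0.01, n_iter=500):
    """Approximately solve min_w 0.5*||x - D w||^2 + lam*||w||_1 with ISTA.

    Assumption: a plain lasso objective; the paper's solver may differ.
    """
    L = np.linalg.norm(D, 2) ** 2          # Lipschitz constant of the gradient
    w = np.zeros(D.shape[1])
    for _ in range(n_iter):
        z = w - D.T @ (D @ w - x) / L      # gradient step on the quadratic term
        w = np.sign(z) * np.maximum(np.abs(z) - lam / L, 0.0)  # soft threshold
    return w

def transplant(x, D, w, src_idx, target_vec):
    """Replace edit: swap the source atom for the target at the solved strength."""
    return x - w[src_idx] * D[:, src_idx] + w[src_idx] * target_vec

# Toy data: 20 unit-norm "concept" atoms in a 64-dimensional latent space.
rng = np.random.default_rng(0)
d, n = 64, 20
D = rng.standard_normal((d, n))
D /= np.linalg.norm(D, axis=0)

# A source representation built from three concepts (indices are arbitrary).
w_true = np.zeros(n)
w_true[[2, 7, 11]] = [1.5, -0.8, 0.6]
x = D @ w_true

w = sparse_decompose(x, D)                 # estimated concept presence
target = rng.standard_normal(d)
target /= np.linalg.norm(target)           # stand-in for the target concept
x_edit = transplant(x, D, w, src_idx=2, target_vec=target)
```

In this sketch the edit strength is not a hand-tuned hyperparameter: it is the coefficient `w[src_idx]` recovered from the source itself, which is the idea the framework description conveys.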

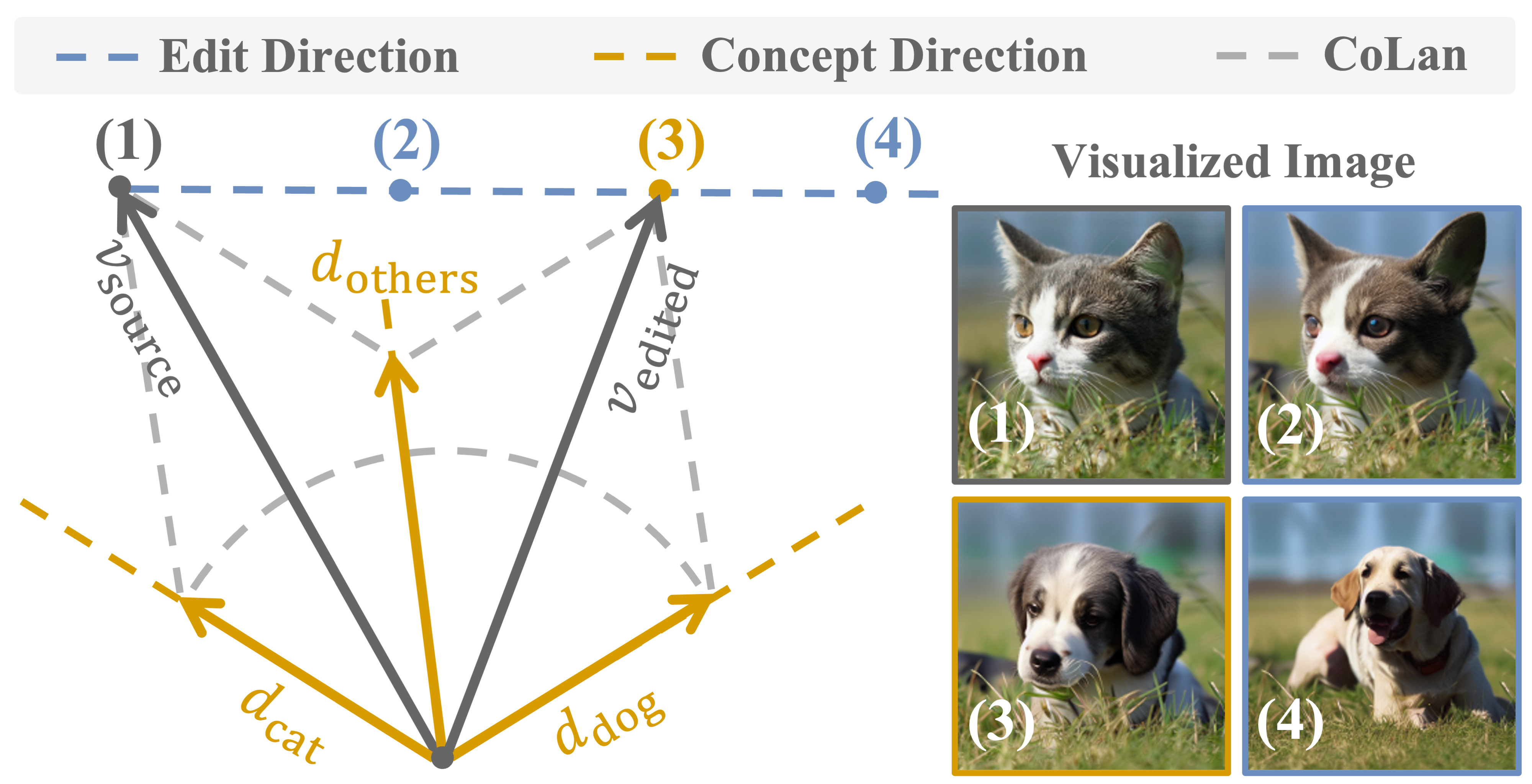

Representation manipulation in diffusion models adds an edit direction to the latent source representation. The edit succeeds only when the magnitude of that direction is accurate, as in Image (3), where CoLan estimates it.
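One way to write this down, as a hedged sketch rather than the paper's exact formulation: let $z$ be the source representation, $d_{\text{src}}$ and $d_{\text{tgt}}$ the (assumed) representations of the source and target concepts, and $\alpha$ the edit strength. All symbols here are introduced for illustration.

```latex
z_{\text{edit}} \;=\; z \;+\; \alpha \,\bigl(d_{\text{tgt}} - d_{\text{src}}\bigr)
```

If $\alpha$ is too large, the edit overwhelms the rest of the image and harms visual consistency; if it is too small, the source concept survives and the edit fails. Estimating $\alpha$ per image from the concept decomposition avoids searching for it by trial and error.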

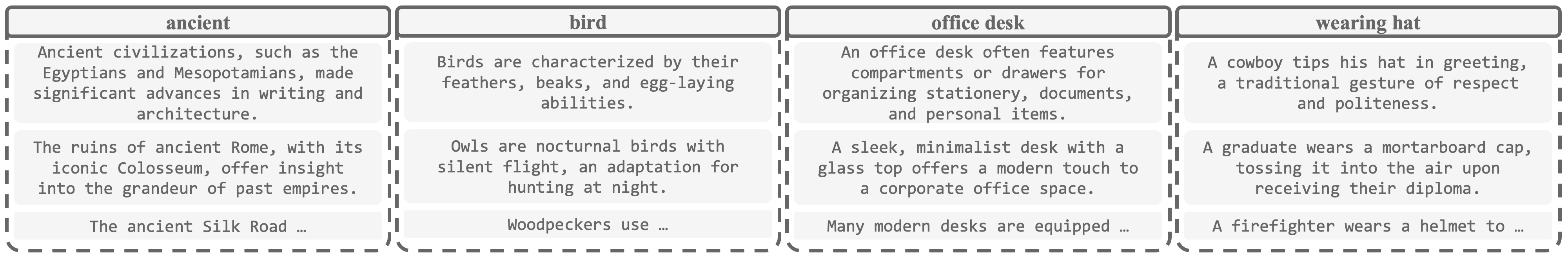

Samples of the concept stimuli from CoLan-150K. Compared to existing collections of conceptual representations for diffusion-based editing, our dataset is a significant scale-up and provides richer, more diverse representations for each concept.

Visual comparisons of CoLan in the text embedding space. Texts in gray are the original captions of the source images from PIE-Bench, and texts in blue are the corresponding edit tasks (replace, add, remove). [x] represents the concepts of interest, and [] represents the null concept.

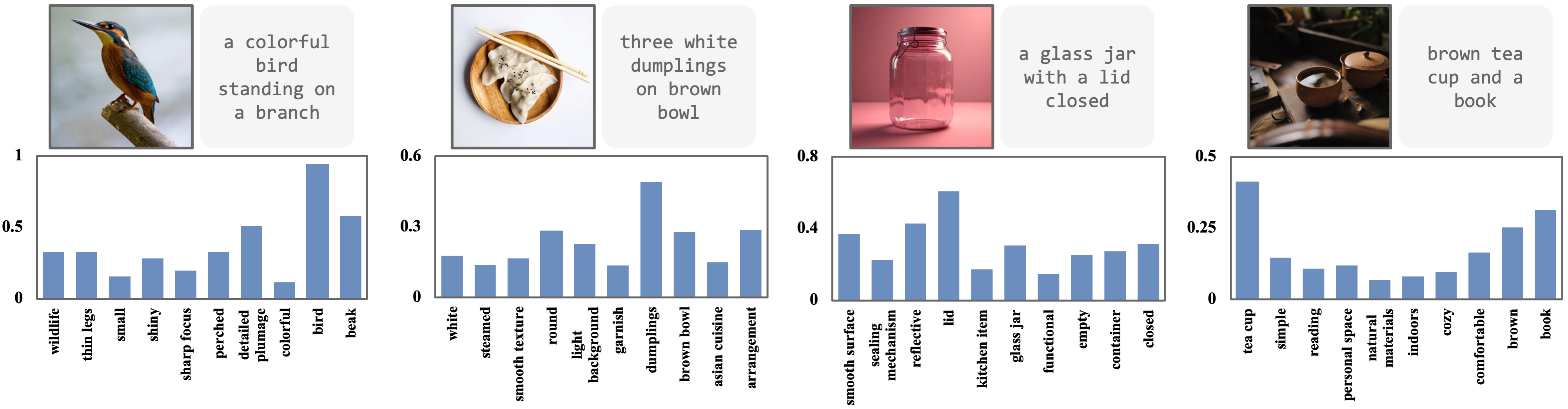

Histograms of the solved magnitudes of the concept atoms in the CoLan decomposition (score space). Each histogram shows the ten concepts whose CoLan coefficients have the largest magnitudes.
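Selecting the concepts to plot amounts to ranking coefficients by absolute value. The snippet below is a small sketch of that ranking; the helper `top_k_concepts` is hypothetical, and the coefficient values are made up for illustration rather than taken from the paper.

```python
import numpy as np

def top_k_concepts(names, coeffs, k=10):
    """Return the k (name, coefficient) pairs with the largest |coefficient|."""
    coeffs = np.asarray(coeffs, dtype=float)
    order = np.argsort(-np.abs(coeffs))[:k]   # indices sorted by descending magnitude
    return [(names[i], float(coeffs[i])) for i in order]

# Illustrative values only; real coefficients come from the decomposition.
names = ["cat", "grass", "sitting", "dog", "sky"]
coeffs = [0.9, 0.4, -0.6, 0.0, 0.1]
top3 = top_k_concepts(names, coeffs, k=3)
```

Ranking by magnitude rather than by signed value keeps concepts with large negative coefficients in view, since they also contribute strongly to the decomposition.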

If you find our work helpful, please consider citing our paper:

@inproceedings{luo2025concept,
  title={Concept Lancet: Image Editing with Compositional Representation Transplant},
  author={Jinqi Luo and Tianjiao Ding and Kwan Ho Ryan Chan and Hancheng Min and Chris Callison-Burch and Rene Vidal},
  booktitle={CVPR},
  year={2025}
}